The Agency Field Map: Sense-Making, AI, and the Future of Work

Being less wrong about the future requires more careful analysis of the present

In my last post I introduced the Synthetic Futures Canvas—a framework to generate more nuanced and introspective conversations about the future.

I provided an example using the future of work, where AI took up about 25% of the space allocated for emerging signals. AI is everywhere, all at once. That makes the current environment hard to make sense of, given the sheer volume of new developments, the urgency of the discourse, and the rate of change. The disorientation is compounded by competing narratives and unreliable narrators amid a dysfunctional information ecosystem.

There is no single source of truth.

As a result, many leaders turn to analyst reports and deterministic predictions about the future. These tell you something about which technologies and applications are trending and on what trajectory (based on varied and often unspecified assumptions). However, they fall short of providing a coherent theory of change or offering sufficient insight to inform strategic choices or prospective actions amid uncertainty. And most of what you read about the future is likely wrong, especially if it makes a prediction rather than expressing a contingent range of outcomes.

A better approach for complex environments is sense-making in context—in particular, improved reasoning about systems through an analysis of actors, actions, and stories in relation to incentives and constraints.

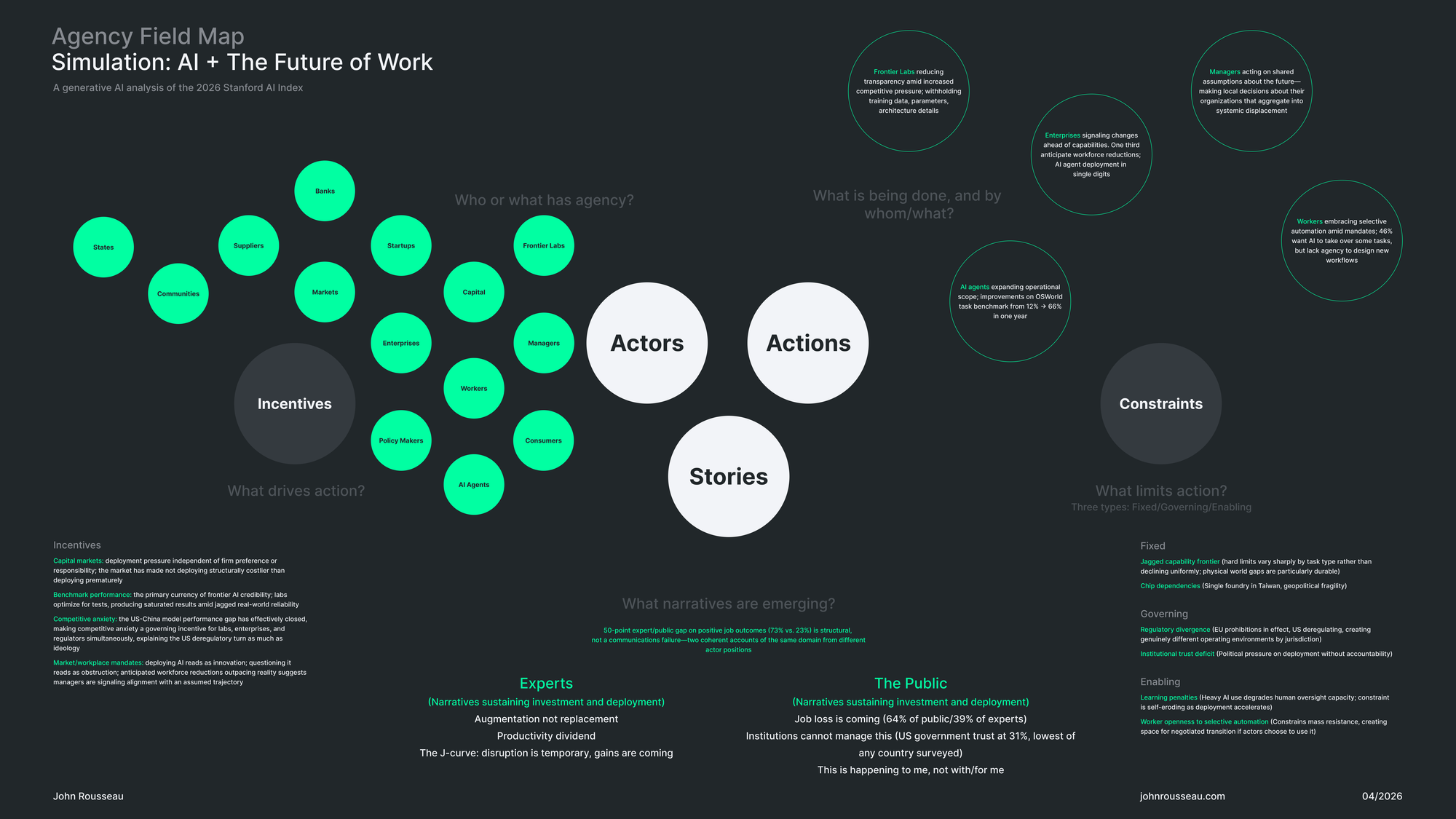

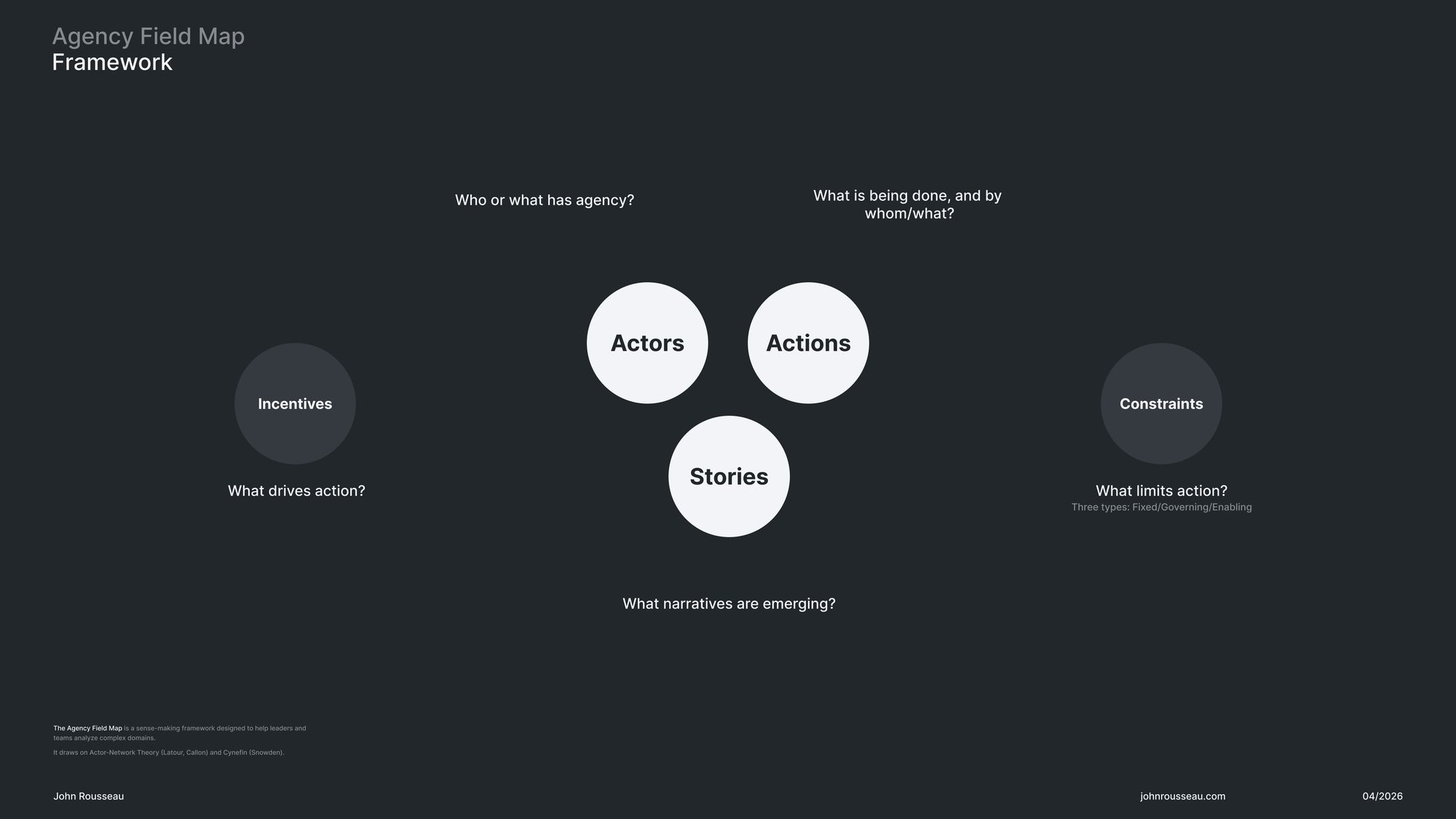

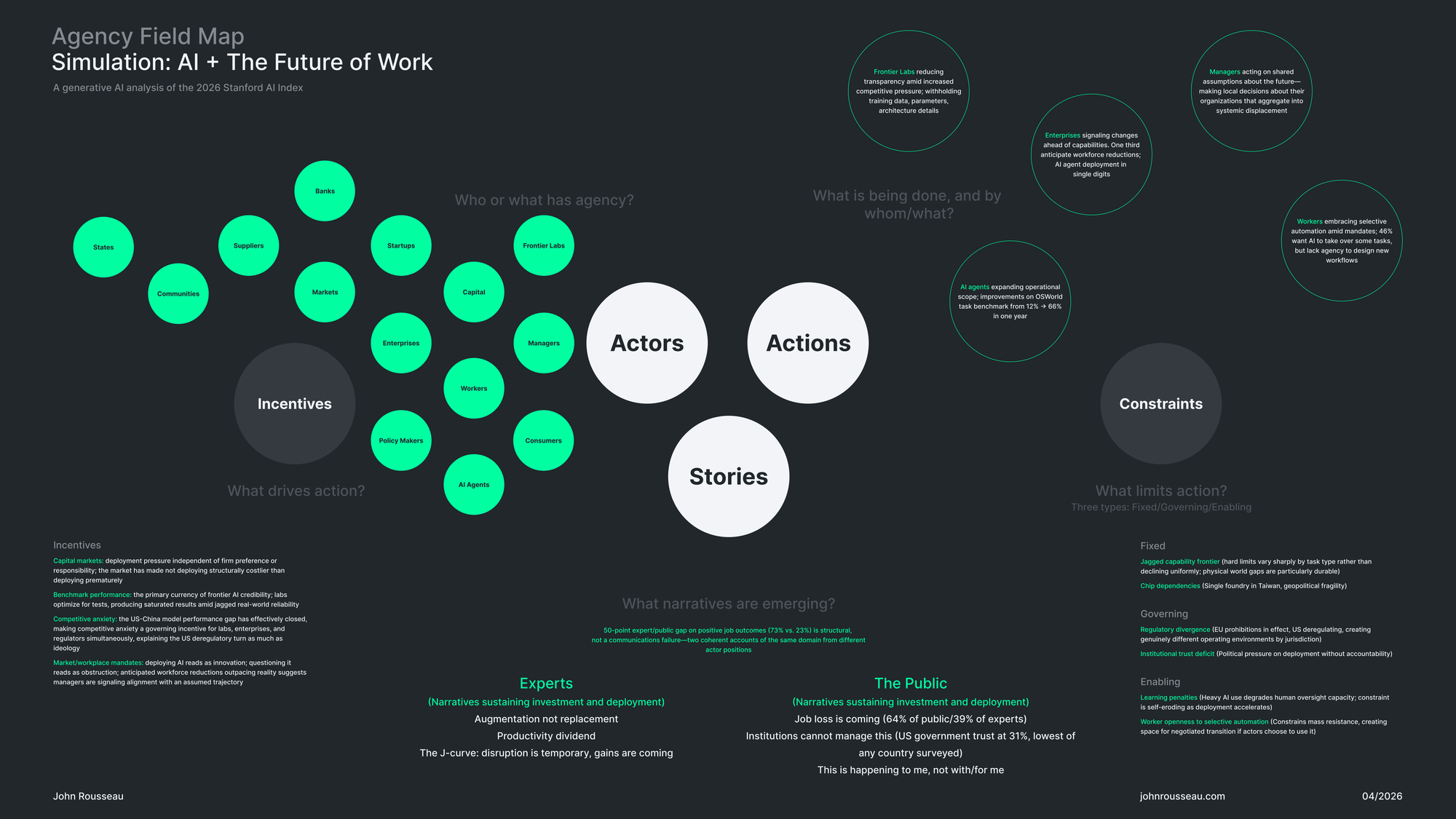

The Agency Field Map (AFM)

I developed this framework based on a need to understand the relational dynamics of an issue—a way to analyze complex domains with emergent and non-linear causality. It draws on ideas from Actor-Network Theory (Callon/Latour) and Cynefin (Snowden) and introduces a unique synthesis and simplified analytical structure optimized for facilitation in groups. It fits in my emerging toolkit along with the Synthetic Futures Canvas as part of a flexible and hybrid approach to applied foresight and strategic design in complexity.

The framework structures analysis around five nodes, each representing a distinct dimension of systems change—and in a specific order.

Core Nodes: Actors, Actions, and Stories

Actors: Who or what has agency?

Analysis begins with mapping who or what has agency in the system. Actors can include humans (people, groups, movements), organizations, markets, and states, as well as non-humans (e.g., autonomous technologies, non-human animals, and ecologies).

Actions: What is being done, and by whom/what?

Actions are behaviors, interactions, or signals of change in the environment that can be attributed to human or non-human actors. Actions also imply objects—stakeholders or systems affected by those actions.

Stories: What narratives are operating?

Stories are implicit or explicit narratives present in the domain. Stories are both inputs and outputs—they simultaneously reflect and shape behavior, and reveal the values, worldviews, and politics of actors.

Peripheral Nodes: Incentives and Constraints

Incentives: What drives action?

Incentives are structural factors that make certain actions more rational, rewarding, or likely than others (e.g., business models, metrics, rewards, and risks).

Constraints: What limits action?

There are three types of constraints—fixed, governing, and enabling (Snowden). Fixed constraints are hard limits on what is possible. Governing constraints are rules and policies that limit action without controlling what is possible. Enabling constraints are conditions that create space for certain actions without controlling outcomes.
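For readers who think in data structures, the five nodes can be sketched as a small schema. This is only an illustration under my own assumptions, not part of the framework itself: the class names, field names, and the `unattributed_actions` helper are hypothetical, and the sample entries are borrowed from the future-of-work example discussed later in this post.

```python
from dataclasses import dataclass, field
from enum import Enum


class ConstraintType(Enum):
    FIXED = "fixed"          # hard limits on what is possible
    GOVERNING = "governing"  # rules and policies that limit action
    ENABLING = "enabling"    # conditions that create space for action


@dataclass
class Actor:
    name: str
    kind: str  # free-text label, e.g. "organization", "market", "non-human"


@dataclass
class Action:
    description: str
    actor: str  # name of the actor the action is attributed to
    objects: list[str] = field(default_factory=list)  # who or what is affected


@dataclass
class Story:
    narrative: str


@dataclass
class Incentive:
    description: str


@dataclass
class Constraint:
    description: str
    kind: ConstraintType


# Order of analysis: the core triad first, then the peripheral nodes.
NODE_ORDER = ("actors", "actions", "stories", "incentives", "constraints")


@dataclass
class AgencyFieldMap:
    issue: str
    actors: list[Actor] = field(default_factory=list)
    actions: list[Action] = field(default_factory=list)
    stories: list[Story] = field(default_factory=list)
    incentives: list[Incentive] = field(default_factory=list)
    constraints: list[Constraint] = field(default_factory=list)

    def unattributed_actions(self) -> list[Action]:
        """Return actions whose actor has not been mapped yet."""
        known = {actor.name for actor in self.actors}
        return [action for action in self.actions if action.actor not in known]


# Illustrative entries only, taken from the example in this post.
example = AgencyFieldMap(
    issue="AI impacts to knowledge work and employment",
    actors=[
        Actor("technology companies", "organization"),
        Actor("capital markets", "market"),
        Actor("AI agents", "non-human"),
    ],
    actions=[
        Action(
            "deploying AI ahead of demonstrated maturity",
            actor="technology companies",
            objects=["workflows", "jobs", "people"],
        )
    ],
    stories=[Story("inevitability"), Story("augmentation")],
    incentives=[Incentive("speed and the race to dominate markets")],
    constraints=[
        Constraint(
            "learning penalties associated with AI automation",
            ConstraintType.ENABLING,
        )
    ],
)
```

Keeping actors and actions as separate lists, linked by name, mirrors the order of operations: a helper like `example.unattributed_actions()` can flag any action recorded before its actor was mapped. In a facilitated session the same structure would live on a wall or a whiteboard; the code is just a compact way to show how the nodes relate.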

Simulation: The Future of Work and AI

To create an illustrative example, I asked Claude to run a simulation using the recent 2026 Stanford AI Index Report as a data source. The simulation followed the AFM structure and order of operations, and focused on “AI impacts to knowledge work and employment” as a specific issue. I also provided additional background on the theoretical foundations, practical context, and intent of the Agency Field Map. The goal of the exercise was to evaluate the prototype and understand whether the schema and order of operations could yield insight beyond what a direct reading of the source material would produce.

A caveat

The AFM framework is designed as a collaborative sense-making tool. This simulation and the resulting artifact are not presented as authoritative or as a recommended way of working. Using the Stanford report, which was independently authored by a large multidisciplinary team, approximates reasoning across diverse perspectives, albeit with the obvious limitations of working with a static document and generative AI. I edited the output for greater clarity and concision.

The jagged frontier

The simulation was useful insofar as it helped validate the logic of the framework with a real dataset. The quality of the analysis was uneven: I worked through five iterations with the AI, checking citations and tuning the prompt each time. For example, I had to clarify that the output should not simply reiterate points from the executive summary in a different form, or conversely elevate obscure ideas as significant patterns. Even so, the summary point about divergent narratives between experts and the public remains in the mix, and is also the most common claim I have seen in coverage of the report. Make of that what you will. Fittingly, the experiment was an example of what the report calls the jagged frontier: increasingly sophisticated models that do well on benchmarks but poorly in real-world applications.

What Makes the Agency Field Map Unique

Who or what has agency?

The inclusion of multiple types of actors—both human and non-human—is intended to deepen our understanding of interconnected systems across varying levels of granularity. The focus on who or what has agency makes system dynamics legible in ways that a trend like “AI adoption reached 88% of organizations” does not. This design decision contextualizes change where it matters—improving our ability to reason about the disposition and trajectory of the system. Agency also suggests responsibility, particularly in terms of who or what are the objects of given actions.

In the example of AI and the future of work, increasingly autonomous agents introduce new non-human actors into the mix, alongside markets and in relation to specific incentives and constraints. For example, capital markets create path dependency by making responsible deployment costly. This makes it easier to see how incentives related to speed and the race to dominate markets push AI agent development ahead of responsible governance and regulatory action, which the Stanford report cites as lagging behind. Meanwhile, the objects of these agents (workflows, jobs, and ultimately people) are generally depicted as abstractions without a clear stake in the future.

Stories as inputs and outputs

The AFM framework holds that narratives are both shaped by the environment and instrumental in shaping it. Stories are not singular, but rather a lattice of interconnected ideas, some old and some new. In the Synthetic Futures Canvas, this distinction is made by referring to deep stories as those inherited from the past, and emerging stories as those that inform what is possible across alternative futures. The Agency Field Map collapses the distinction, and is oriented toward the present.

The Stanford report reflects the baseline optimism and deterministic view of progress of its authors and, more broadly, of the expert class and the institutional context in which they operate. The authors note a 50-point gap between expert opinion and public opinion, with 73% of experts expecting positive outcomes vs. 23% of the public. The narrative landscape can be analyzed along this divide, the implication being that perceived and actual agency are more downstream of faith than of evidence. Thus, actions like deploying AI ahead of demonstrated maturity (the “J Curve” story) simultaneously reflect beliefs about the future (“inevitability,” “progress,” “augmentation”) and experiences in the present (“mixed signals,” “low adoption rates,” “low trust,” etc.).

How to influence action—obliquely

Because stories are easy to see, it is tempting to try to change the system by influencing the narratives and/or underlying worldviews of the actors. For example, one might encourage technology companies to adopt more responsible practices or direct public opinion toward alternative images of the future. This is easier imagined than accomplished, because direct interventions focused on actors, actions, or stories do not produce predictable or stable outcomes in a complex adaptive system.

An alternative is to focus on oblique strategies—designing interventions at the periphery, focused on the incentives and constraints that drive or limit action. Different incentives create different behaviors, and different constraints make certain actions more or less likely than others.

The simulation identified a key enabling constraint for the future of work: learning penalties associated with AI automation. Specifically, as AI is deployed for tasks that require “deeper reasoning and judgement” and oversight (e.g., in complex software development), human capabilities atrophy—neutralizing or reversing productivity gains. The constraint is self-degrading: because capacity erodes gradually and invisibly, measurable impacts will not appear until much later. And by that time, the space for intervention will have narrowed considerably or possibly closed altogether. If the industry is serious about augmentation over replacement, better outcomes will be realized by increasing incentives for responsible development on one hand and more intentional constraints regarding human oversight on the other.

Sense-making in the present

My goal with this framework is to add greater depth to the Synthetic Futures Canvas, so that in combination it is possible to re-frame our understanding of the present on the way to different actions and preferable futures. The Canvas is primarily a visioning tool, meant to identify the sorts of transformation stories we want amid complexity, uncertainty, and the inheritance of the past. The Agency Field Map adds analytical nuance and new affordances for action: nuance in terms of structured analysis across the core triad of actors, actions, and stories, and new affordances in terms of the incentives and constraints that might be used to design interventions that influence the disposition and trajectory of the system.

As always, this is a work in progress.